Violent and gory mobile ads have become a growing challenge for developers and publishers who prioritize user trust and quality. Users increasingly notice graphic and disturbing ad content, which degrades their experience. This graphic and often illegal content damages your company’s reputation and hurts retention. Understanding how to effectively block and report ads containing images of violence and gore is essential, especially in apps designed for children.

This article gives mobile developers practical solutions, focusing on dedicated tools that streamline ad quality management. It includes strategies tailored specifically to keeping violent and graphic imagery out of mobile children’s games, where it harms your reputation and revenue streams. Read on to discover approaches from our industry experts at AppHarbr to proactively protect your users, elevate your ad experience, and secure your app’s reputation.

What Are Mobile Violent Ads and Why Are They Appearing?

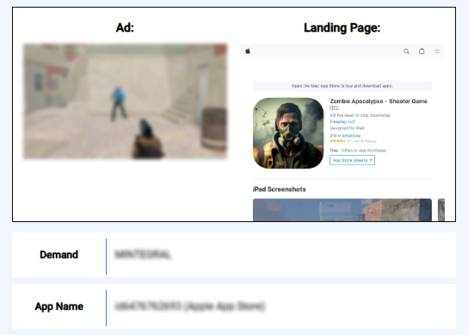

Mobile violent ads have become disruptive for both developers and users. These are ads that depict graphic violence, gore, crime, weapon imagery or war, aggressive combat scenes, or explicit injuries, and they appear unexpectedly within mobile games and other apps for children. Several factors contribute to this issue, such as automated advertiser targeting priorities, poor content filtering standards from networks and platforms, or bad-faith actors who knowingly serve images or videos of crime and illegal activity to an audience of children. For these reasons, implementing proactive ad quality solutions and automated detection tools is critical for studios, especially ones that publish children’s games.

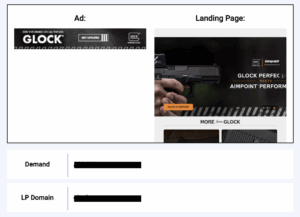

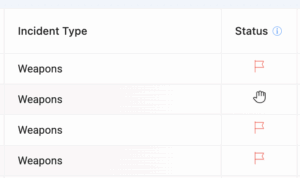

Figure 1: Advertisement for Violent Shooting Game with Corresponding Landing Page

The Risks and Dangers of Violent Ads in Mobile Games

Violent and graphic ads can cause significant psychological and behavioral harm to users, such as anxiety, trauma, and desensitization, which is particularly troubling for kids and younger audiences. Such content is not only a problem for the children on the receiving end; developers face damage to their reputation, negative reviews, lost user trust, retention problems, potential regulatory fines, and reduced monetization potential. Proactive content filtering solutions that target graphic ads prevent these outcomes, enabling sustainable growth.

When it comes to advertisers promoting weapons, crime, and similar explicit behavior, their placement in front of the wrong audience can have severe consequences for your business. While excessive display of such content may be off-putting to adults, resulting in churn, the issue is more severe when it targets children. Google Play and the Apple App Store have very strict community guidelines when it comes to what kinds of advertisements can be promoted in children’s apps. Serving ads that promote weapons or crime can result in penalties to the publisher, such as suspension from the App Store or permanent de-listing. Because of the high stakes when it comes to serving ads to children, reactive ad quality management alone cannot secure ad quality.

Beyond player discomfort, there are serious business and compliance issues. A recent report found that many mobile-game ads include “extreme scenes of blood, weaponry, bone-breaking, dismemberment, and even beatings leading to death.” The consequences of serving these ads run deep:

1. User experience & retention

A survey of mobile gamers revealed that 58% would quit a game immediately because of disruptive ads, and 84% would uninstall the app after repeated exposure. When the ad content features violence or weapons in contexts inconsistent with the app’s brand—especially children’s titles—the damage to retention is compounded.

2. Brand & reputation damage

Regulatory commentary from the Advertising Standards Authority confirms mobile-game ads that depict sexual violence or weapon-based dominance are likely to be ruled unacceptable. This means your company’s perceived responsibility goes beyond gameplay quality.

3. Revenue and performance impact

Poor ad creatives, especially those that generate shock or churn, harm user sentiment and hurt monetisation. Deloitte’s commentary on mobile gaming notes that disruptive ad formats undermine user trust and thus reduce long-term engagement and monetisation potential. Because violent or weapon-heavy ad content often contributes to that “disruptive” perception, the connection to revenue risk is direct.

Protecting Young Users: Automated Tools to Block Violent and Gore Ads

Developers should integrate real-time, proactive detection that spares their audience from unwanted content. While users can install ad blockers on their own or their kids’ devices, the onus is ultimately on the company to create a safe and positive user experience and serve only appropriate and intended ad content. Doing so requires an integrated solution that stops inappropriate content in real time before it reaches the user and creates reports as needed. This kind of advanced system is what safeguards your business from churn and revenue losses. AppHarbr’s robust, lightweight solution streamlines this process for developers and ad ops teams, reducing the manual hours needed to achieve these goals.

Leveraging Advanced Ad SDKs to Filter Weapon Imagery and Graphic Violence

Specialized in-app solutions like AppHarbr employ AI and machine learning technology that accurately detects and reports ads promoting weapon imagery and crime. While a company may choose to create its own solution, manual review can’t keep up with the speed at which advertisers are serving these creatives in children’s games. Additionally, by the time developers learn of these problematic creatives, the harm to your users and your business has already been done: users or parents leave complaints, turn to social forums, and delete their accounts.

Establish Ad Quality Control to Prevent Graphic and Violent Content

Leveraging targeted ad quality solutions eliminates the need for endless manual QA and creates a streamlined system for detecting violent ads and eliminating them before they reach users, which is particularly crucial in kids’ apps. Effective ad quality management doesn’t require your team to chase down problematic advertisements manually, one by one. These measures allow developers to identify, analyze, and rapidly address any instance of violent or inappropriate ads served to children and avoid policy violations that harm users and business goals.

In-App Protection: Detect and Stop Violent Ads Before They Reach Users

The traditional methods developers use to address the issue solve only half the problem. While reporting single examples of images and videos that promote illegal activity can prevent the creative from targeting children a second time, much of the damage has already been done to your business. Parents complain, users churn, and in extreme cases, suspension or delisting from the Apple App Store or Google Play Store is possible. A robust in-app protection framework combines real-time, automated detection and filtering SDKs with proactive monitoring dashboards such as AppHarbr, clear guidelines for advertisers, and user-friendly mechanisms for reporting advertisements.

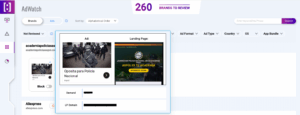

These comprehensive approaches create a streamlined and secure process for your company to manage depictions of violence, crime, and illegal activity in ads, significantly reduce the possibility of policy violations for your game, and automate the intervention process. All the details and data of what’s advertised are visible in your account, including categories, company, and date, and support streamlined QA for your company. By reducing the need for human intervention, AppHarbr gives your developers back their time and energy, erasing the need for endless damage control.
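To make the idea of pre-display filtering concrete, here is a minimal sketch in Python. It is not AppHarbr’s SDK or API: the names `Creative`, `BlockRecord`, `screen_creative`, and the category labels are all hypothetical, and it assumes an upstream classifier has already labeled each creative. It simply shows a check that runs before an ad is shown and records the category, company, and date of anything it blocks.

```python
from dataclasses import dataclass
from datetime import date

# Hypothetical category labels; a real classifier or SDK defines its own taxonomy.
BLOCKED_CATEGORIES = {"violence", "gore", "weapons", "crime"}


@dataclass(frozen=True)
class Creative:
    creative_id: str
    advertiser: str
    categories: frozenset  # labels assumed to come from an upstream classifier


@dataclass(frozen=True)
class BlockRecord:
    creative_id: str
    advertiser: str
    matched: tuple  # blocked categories found on the creative
    blocked_on: date


def screen_creative(creative, today, log):
    """Return True if the creative may be shown; otherwise log it and return False."""
    matched = tuple(sorted(creative.categories & BLOCKED_CATEGORIES))
    if matched:
        log.append(
            BlockRecord(creative.creative_id, creative.advertiser, matched, today)
        )
        return False
    return True
```

In this sketch the decision happens before rendering, so a blocked creative never reaches the user, and the log doubles as the record a QA team would review later.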

How to Report and Remove Offensive Combat and Gore Ads

Combat and gore ads have no place being advertised in games targeted toward children, and serving such content is not inevitable. AppHarbr’s advanced technology gives you transparent access to all the programmatic activity occurring in your children’s app. It allows you to proactively block inappropriate creatives and report creatives and advertiser sources as needed, rather than spending endless hours chasing the source of the issue. Documenting these points and communicating expectations to advertisers reduces incidents of explicit content and enhances overall compliance.
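As one illustration of reporting advertiser sources rather than chasing single creatives, the sketch below counts blocked incidents per advertiser and surfaces the repeat offenders. The function name `repeat_offenders` and the default threshold of three are assumptions made for this example, not part of any AppHarbr product.

```python
from collections import Counter


def repeat_offenders(blocked_advertisers, threshold=3):
    """Return (advertiser, count) pairs with at least `threshold` blocks, most frequent first."""
    counts = Counter(blocked_advertisers)
    return [(name, n) for name, n in counts.most_common() if n >= threshold]
```

Given a list of advertiser names taken from blocked-ad records, the result is a short escalation list that can be shared with the ad network or platform.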

Best Practices to Permanently Stop Graphic Imagery and Violent Ads in Mobile Games

Many ad monetization teams are currently relying on a process that requires trying to keep up with inappropriate advertising by searching for and manually reporting each incident, spending hours tracking down the involved advertisers or platforms to try and prevent the issue from occurring again. This is not a sustainable method for safeguarding your ad quality. Developers must leverage AI-powered ad detection solutions, maintain productive communication with their platforms, and continuously optimize through analytics to collectively form a sustainable strategy to stop graphic, violent ads from reaching children.

Taking Action Against Violent Ads: Protect Users, Strengthen Your Brand, and Boost Revenue

Tackling violent ads in mobile games requires proactive solutions and integrated technology to protect users effectively. By deploying AppHarbr’s advanced ad quality solution, game developers and publishers can sustainably protect their brands, enhance user experience, bolster retention, and ensure reliable monetization. Stay up to date with an automated solution that takes away the heavy lifting.

Ready to safeguard your users from violent and graphic ads? Visit AppHarbr today for additional information and a comprehensive demonstration of our industry-leading ad quality, moderation, and SDK solutions designed specifically for proactive content filtering and user protection.